Overview

Abstract

Inverse rendering with Gaussian Splatting has advanced rapidly, but accurately disentangling material properties from complex global illumination effects, particularly indirect illumination, remains a major challenge. Existing methods often query indirect radiance from Gaussian primitives pre-trained for novel-view synthesis, but these lack supervision for modeling indirect radiances from unobserved views.

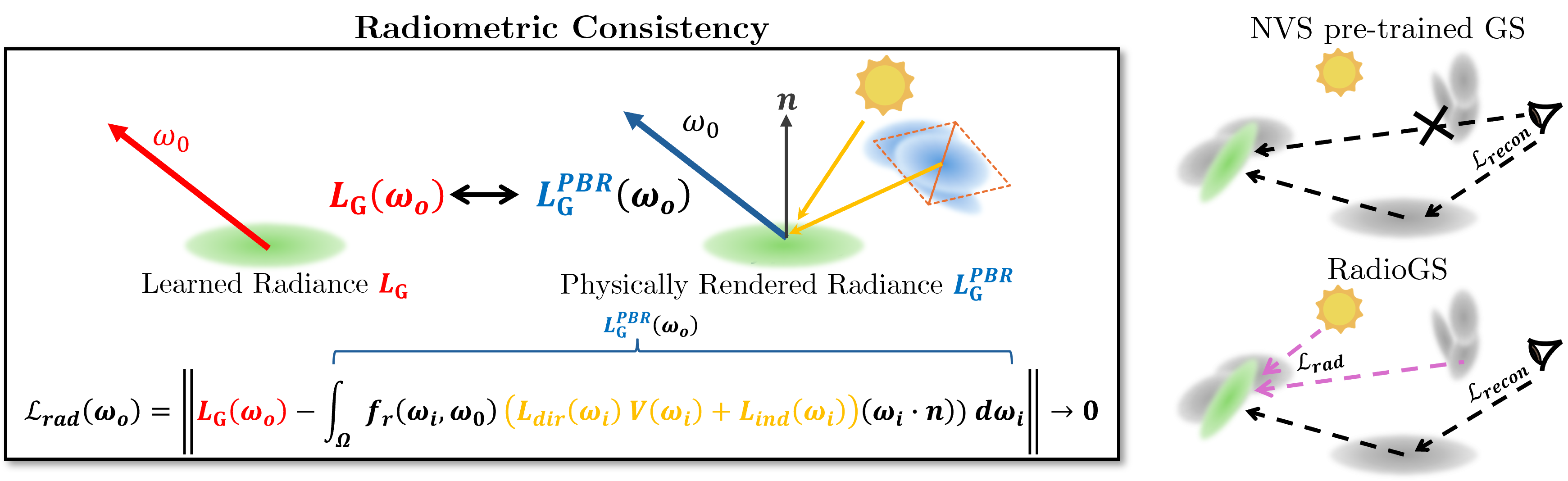

We introduce a radiometric consistency loss, a novel physically-based constraint that supervises unobserved views by minimizing the residual between each Gaussian primitive's learned radiance and its physically-based rendered counterpart. This establishes a self-correcting feedback loop between physically-based rendering and novel-view synthesis, enabling accurate modeling of inter-reflection.

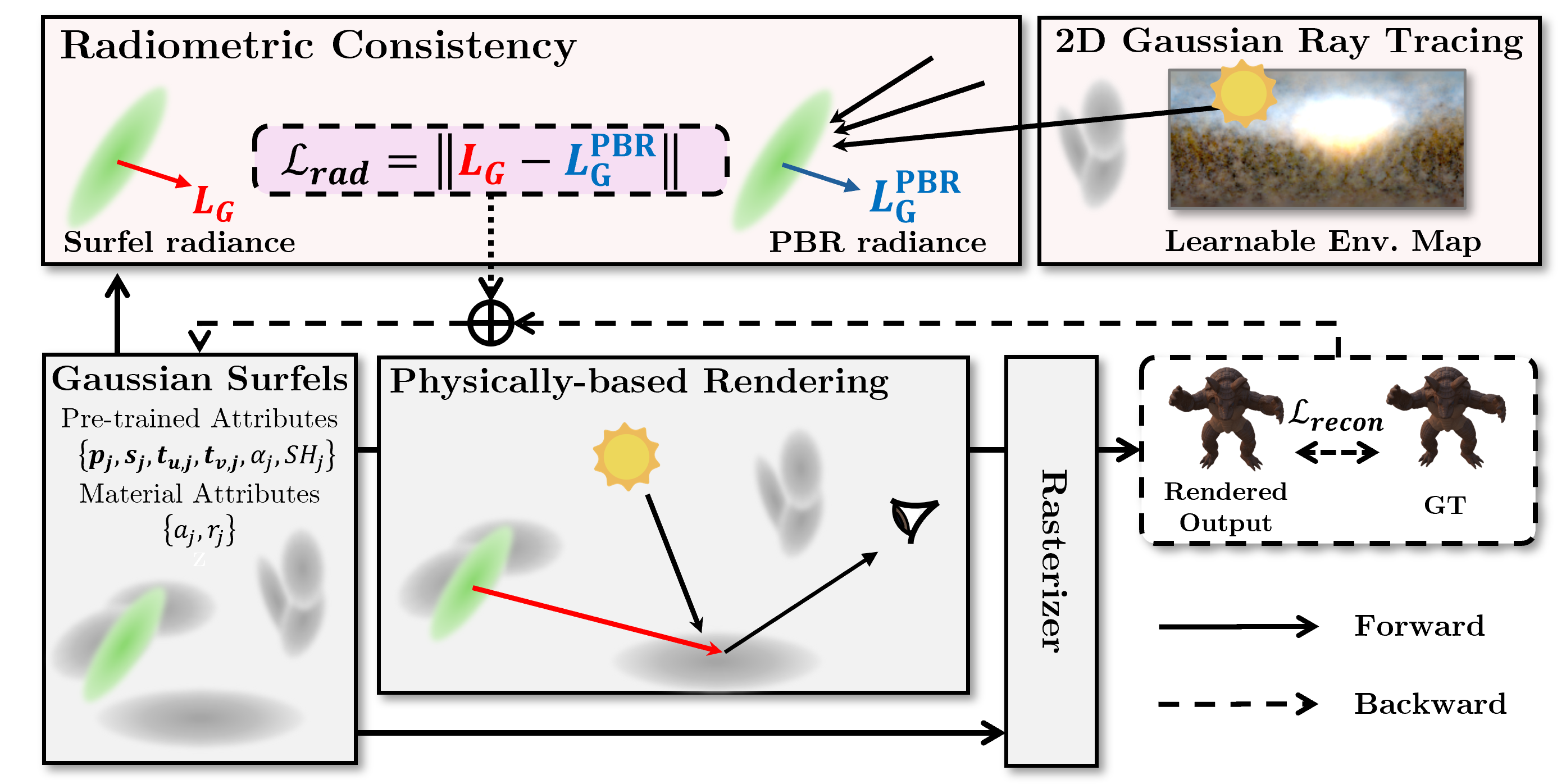
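As a rough illustration of the residual described above, the physically-based radiance can be written with the standard rendering equation and compared against the learned radiance. The symbols below ($L_{\text{learned}}$, $L_{\text{PBR}}$, $\mathcal{L}_{\text{rad}}$, the BRDF $f_r$) and the choice of norm are assumptions made for this sketch; the page does not state the exact formulation.

$$
L_{\text{PBR}}(\mathbf{x}, \omega_o) = \int_{\Omega} f_r(\mathbf{x}, \omega_i, \omega_o)\, L_i(\mathbf{x}, \omega_i)\, (\mathbf{n} \cdot \omega_i)\, d\omega_i
$$

$$
\mathcal{L}_{\text{rad}} = \mathbb{E}_{\mathbf{x},\, \omega_o}\left[\, \left\| L_{\text{learned}}(\mathbf{x}, \omega_o) - L_{\text{PBR}}(\mathbf{x}, \omega_o) \right\| \,\right]
$$

Here $\mathbf{x}$ is a Gaussian primitive's position, $\mathbf{n}$ its normal, $\omega_o$ an outgoing direction that need not coincide with any training camera, and $L_i$ the incoming radiance, which includes radiance queried from other primitives.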

We then propose RadioGS, an inverse rendering framework that efficiently integrates radiometric consistency using Gaussian surfels and 2D Gaussian ray tracing. We further introduce a finetuning-based relighting strategy that adapts Gaussian surfel radiances to new illumination within minutes while keeping rendering cost low (<10 ms per frame). Extensive experiments show that RadioGS outperforms existing Gaussian-based methods while retaining computational efficiency.

Methods

Radiometric Consistency

- A novel physically-based constraint that guides Gaussian surfels to self-correct their radiance by enforcing consistency between learned surfel radiance and physically-based rendered radiance for unobserved viewpoints.
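The constraint above can be sketched for a single surfel as follows. This is a minimal illustration and not the RadioGS implementation: it assumes a Lambertian BRDF, scalar (single-channel) radiance, and a hypothetical `incoming_radiance` callable standing in for the ray-traced radiance that the framework would query from other surfels. Because a Lambertian surface is view-independent, the outgoing direction drops out of this toy version.

```python
import math
import random

def sample_cosine_hemisphere(rng):
    """Cosine-weighted direction in the local frame whose +z axis is the normal."""
    u1, u2 = rng.random(), rng.random()
    r, phi = math.sqrt(u1), 2.0 * math.pi * u2
    return (r * math.cos(phi), r * math.sin(phi), math.sqrt(max(0.0, 1.0 - u1)))

def pbr_radiance(albedo, incoming_radiance, n_samples=64, seed=0):
    """Monte Carlo estimate of outgoing radiance for a Lambertian surfel.

    With BRDF = albedo / pi and pdf = cos(theta) / pi, each sample's
    contribution reduces to albedo * L_i(direction).
    """
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_samples):
        total += incoming_radiance(sample_cosine_hemisphere(rng))
    return albedo * total / n_samples

def radiometric_residual(learned_radiance, albedo, incoming_radiance):
    """Absolute residual between learned and physically-based radiance (assumed L1)."""
    return abs(learned_radiance - pbr_radiance(albedo, incoming_radiance))

# Hypothetical uniform environment: every direction carries radiance 2.0.
uniform_sky = lambda direction: 2.0

print(radiometric_residual(1.0, 0.5, uniform_sky))  # learned radiance agrees with PBR
print(radiometric_residual(1.4, 0.5, uniform_sky))  # learned radiance is too bright
```

In a training loop, a residual of this kind would be minimized with respect to the learned radiance and the material parameters, so a surfel whose stored radiance disagrees with what its material and surroundings imply is pulled back toward consistency.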

RadioGS

- An inverse rendering framework that efficiently integrates radiometric consistency using Gaussian surfels and differentiable 2D Gaussian ray tracing. Our framework provides a self-correcting feedback loop between physically-based rendering and novel-view synthesis, enabling accurate modeling of inter-reflection for inverse rendering.

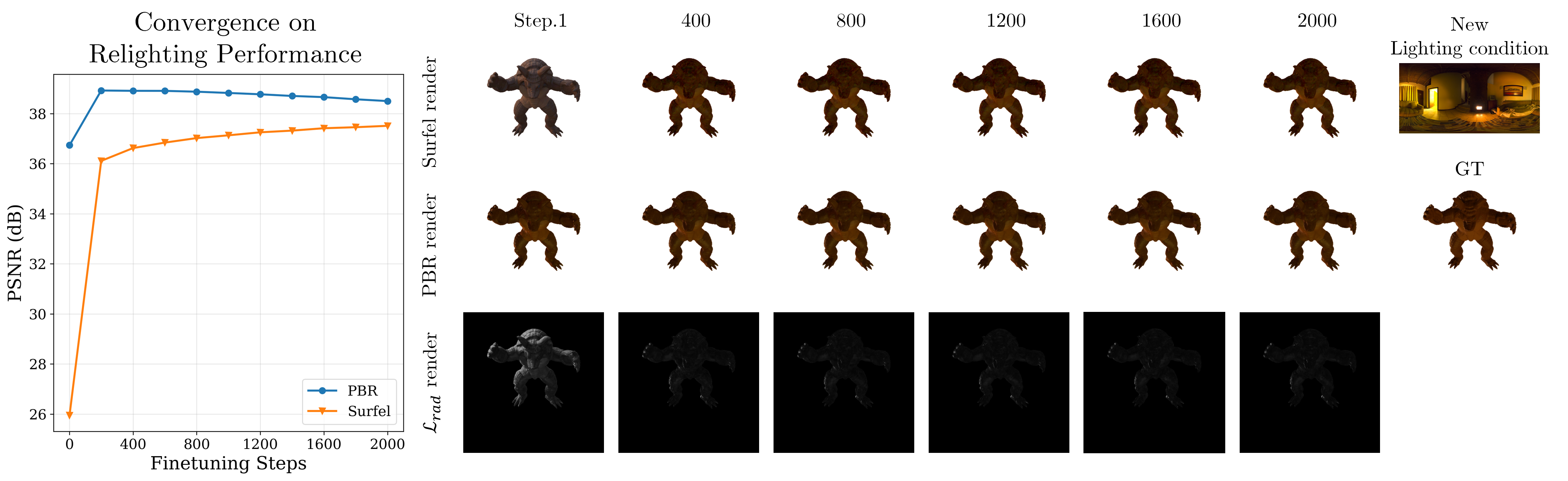

Efficient Relighting

- Our finetuning-based relighting approach adapts surfel radiances to new lighting conditions by enforcing radiometric consistency with physically-based radiance, leading to fast convergence (~2 minutes). This enables real-time rendering (<10 ms per frame) by directly rasterizing Gaussian surfels.
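The idea of finetuning stored radiances toward a physically-based target can be illustrated with a toy two-surfel scene. Everything here is an assumption made for illustration: the `coupling` weights (a stand-in for ray-traced light transport between surfels), the scalar radiances, the squared-error objective, and the choice to hold the target fixed within each gradient step. It is not the authors' training procedure.

```python
def pbr_targets(radiance, albedo, direct, coupling):
    """Physically-based radiance per surfel: reflect direct light plus
    radiance gathered from the other surfels (hypothetical coupling weights)."""
    n = len(radiance)
    return [
        albedo[i] * (direct[i] + sum(coupling[i][j] * radiance[j] for j in range(n)))
        for i in range(n)
    ]

def finetune_radiance(radiance, albedo, direct, coupling, lr=0.5, steps=200):
    """Gradient descent on 0.5 * (r_i - target_i)^2 for every surfel."""
    r = list(radiance)
    for _ in range(steps):
        targets = pbr_targets(r, albedo, direct, coupling)
        # Gradient w.r.t. r_i with the target held fixed for this step.
        r = [ri - lr * (ri - ti) for ri, ti in zip(r, targets)]
    return r

albedo = [0.5, 0.8]
coupling = [[0.0, 0.2], [0.2, 0.0]]
old_radiance = [0.3, 0.05]   # radiances learned under the original lighting
new_direct = [1.0, 0.0]      # new lighting: surfel 1 is lit only indirectly

relit = finetune_radiance(old_radiance, albedo, new_direct, coupling)
residual = max(
    abs(r - t)
    for r, t in zip(relit, pbr_targets(relit, albedo, new_direct, coupling))
)
print(relit, residual)
```

Once the radiances have converged, they already contain the inter-reflected light, so rendering a frame needs only a lookup of stored radiance rather than a fresh light-transport computation. That is the intuition behind rasterizing the surfels directly after finetuning.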

Results

Lego Scene Qualitative Results

- RadioGS shows enhanced decomposition, robust performance on geometrically complex regions, and realistic relighting results.

Indirect Illumination Modeling Comparison

- We built a custom dataset based on TensoIR to obtain ground-truth (GT) indirect illumination. RadioGS produces realistic and detailed indirect illumination, whereas IRGS overestimates its intensity and SVG-IR underestimates it.

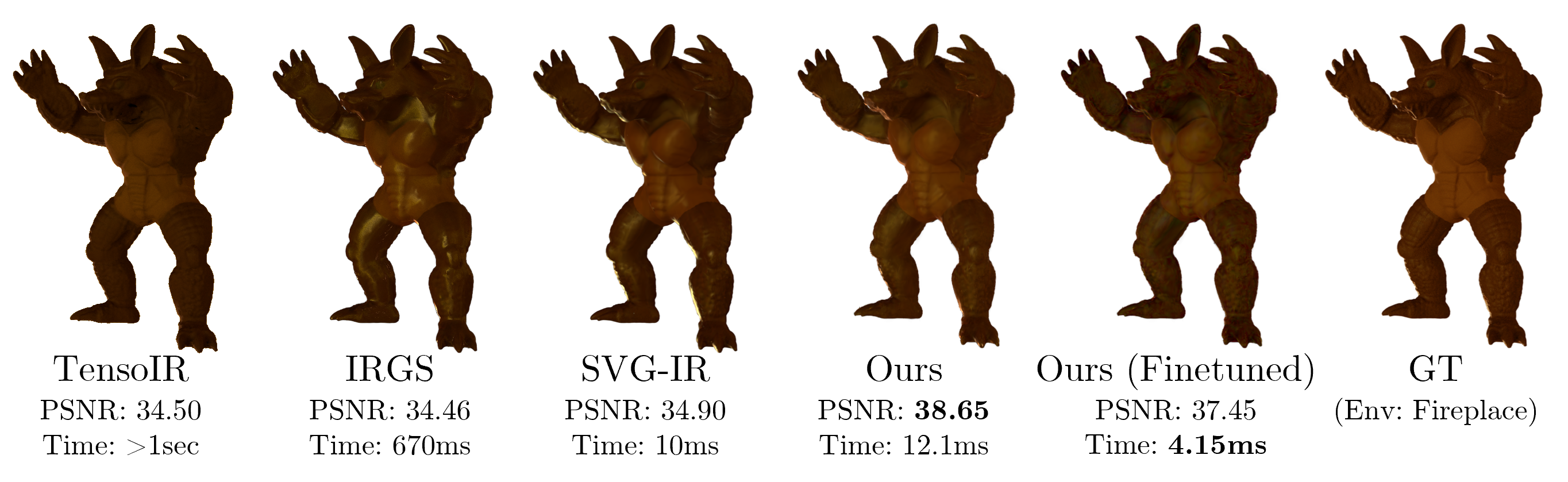

Relighting Performance and Rendering Cost

- Relighting the “armadillo” scene under the fireplace environment yields realistic results and demonstrates real-time rendering under new lighting conditions.

Citation

@inproceedings{han2026radiogs,
  title={RadioGS: Radiometrically Consistent Gaussian Surfels for Inverse Rendering},
  author={Han, Kyu Beom and Kim, Jaeyoon and Kim, Woo Jae and Seo, Jinhwan and Yoon, Sung-Eui},
  booktitle={International Conference on Learning Representations (ICLR)},
  year={2026}
}